Here are a few examples to get you started!

In the examples folder, you will also find example models for real datasets:

- CIFAR10 small images classification: Convolutional Neural Network (CNN) with realtime data augmentation

- IMDB movie review sentiment classification: LSTM over sequences of words

- Reuters newswires topic classification: Multilayer Perceptron (MLP)

- MNIST handwritten digits classification: MLP & CNN

- Character-level text generation with LSTM

...and more.

Multilayer Perceptron (MLP) for multi-class softmax classification:

from keras.models import Sequential

from keras.layers import Dense, Dropout, Activation

from keras.optimizers import SGD

model = Sequential()

# Dense(64) is a fully-connected layer with 64 hidden units.

# in the first layer, you must specify the expected input data shape:

# here, 20-dimensional vectors.

model.add(Dense(64, input_dim=20, init='uniform'))

model.add(Activation('tanh'))

model.add(Dropout(0.5))

model.add(Dense(64, init='uniform'))

model.add(Activation('tanh'))

model.add(Dropout(0.5))

model.add(Dense(10, init='uniform'))

model.add(Activation('softmax'))

sgd = SGD(lr=0.1, decay=1e-6, momentum=0.9, nesterov=True)

model.compile(loss='categorical_crossentropy', optimizer=sgd)

model.fit(X_train, y_train, nb_epoch=20, batch_size=16, show_accuracy=True)

score = model.evaluate(X_test, y_test, batch_size=16)
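The `SGD(lr=0.1, decay=1e-6, momentum=0.9, nesterov=True)` settings above are easier to reason about with the update rule written out. The following is a minimal sketch of one common formulation of SGD with time-based learning-rate decay and Nesterov momentum, applied to a single scalar. The function name `sgd_step` and the toy objective are illustrative assumptions, and the sketch is not taken from the Keras source.

```python
def sgd_step(param, grad, velocity, iteration,
             lr=0.1, decay=1e-6, momentum=0.9, nesterov=True):
    """One SGD update on a single scalar parameter (illustrative only)."""
    # Time-based decay: the effective learning rate shrinks as iterations grow.
    effective_lr = lr / (1.0 + decay * iteration)
    # The velocity accumulates a decaying sum of past gradient steps.
    velocity = momentum * velocity - effective_lr * grad
    if nesterov:
        # Nesterov variant: step along the updated velocity, then apply the gradient again.
        param = param + momentum * velocity - effective_lr * grad
    else:
        param = param + velocity
    return param, velocity


# Toy problem (an assumption for illustration): minimise f(w) = w**2, gradient 2*w.
w, v = 5.0, 0.0
for step in range(200):
    w, v = sgd_step(w, 2.0 * w, v, step)
```

With a decay as small as `1e-6`, the effective learning rate barely changes over a few hundred iterations. The momentum term is what mostly shapes the trajectory here.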

Alternative implementation of a similar MLP:

model = Sequential()

model.add(Dense(64, input_dim=20, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(64, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(10, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='adadelta')
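The MLP snippets above assume that `X_train` and `y_train` already exist. As a rough sketch of the shapes they expect, the following NumPy code builds dummy 20-dimensional inputs and one-hot targets for 10 classes, which is the target format `categorical_crossentropy` works with. The sample count and the random seed are arbitrary assumptions.

```python
import numpy as np

nb_samples, input_dim, nb_classes = 1000, 20, 10  # hypothetical sizes

rng = np.random.RandomState(0)
X_train = rng.random_sample((nb_samples, input_dim))   # float features
labels = rng.randint(0, nb_classes, size=nb_samples)   # integer class ids

# One-hot encode: row i has a single 1 in column labels[i].
y_train = np.zeros((nb_samples, nb_classes))
y_train[np.arange(nb_samples), labels] = 1.0
```

Keras also ships a helper for the one-hot step (`to_categorical` in `keras.utils.np_utils`). The manual version is shown only to make the layout explicit.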

MLP for binary classification:

model = Sequential()

model.add(Dense(64, input_dim=20, init='uniform', activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(64, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(1, activation='sigmoid'))

model.compile(loss='binary_crossentropy', optimizer='rmsprop')
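For the binary case, the single sigmoid unit outputs a probability and the targets are plain 0/1 labels. The sketch below writes out the usual binary cross-entropy formula in pure Python to show what the loss rewards. The clipping constant `eps` is an assumption for numerical safety and is not necessarily the value Keras uses.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy over paired lists of labels and probabilities."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)


confident = binary_crossentropy([1, 0], [0.9, 0.1])  # correct and confident
unsure = binary_crossentropy([1, 0], [0.5, 0.5])     # always predicts 0.5
```

A model that always outputs 0.5 scores `log(2)` per sample, which makes a handy baseline when reading training logs.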

VGG-like convnet:

from keras.models import Sequential

from keras.layers import Dense, Dropout, Activation, Flatten

from keras.layers import Convolution2D, MaxPooling2D

from keras.optimizers import SGD

model = Sequential()

# input: 100x100 images with 3 channels -> (3, 100, 100) tensors.

# this applies 32 convolution filters of size 3x3 each.

model.add(Convolution2D(32, 3, 3, border_mode='valid', input_shape=(3, 100, 100)))

model.add(Activation('relu'))

model.add(Convolution2D(32, 3, 3))

model.add(Activation('relu'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

model.add(Convolution2D(64, 3, 3, border_mode='valid'))

model.add(Activation('relu'))

model.add(Convolution2D(64, 3, 3))

model.add(Activation('relu'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

model.add(Flatten())

# Note: Keras does automatic shape inference.

model.add(Dense(256))

model.add(Activation('relu'))

model.add(Dropout(0.5))

model.add(Dense(10))

model.add(Activation('softmax'))

sgd = SGD(lr=0.1, decay=1e-6, momentum=0.9, nesterov=True)

model.compile(loss='categorical_crossentropy', optimizer=sgd)

model.fit(X_train, Y_train, batch_size=32, nb_epoch=1)
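The note about automatic shape inference can be checked by hand. The sketch below traces the spatial size through the convnet above, assuming stride-1 `valid` convolutions (including the layers that do not set `border_mode` explicitly) and non-overlapping 2x2 pooling. The helper names are hypothetical.

```python
def conv_valid(size, kernel=3):
    """Output length of a stride-1 'valid' convolution along one spatial axis."""
    return size - kernel + 1


def pool(size, pool_size=2):
    """Output length of non-overlapping max pooling (floor division)."""
    return size // pool_size


size = 100
trace = [size]
for layer in ["conv", "conv", "pool", "conv", "conv", "pool"]:
    size = conv_valid(size) if layer == "conv" else pool(size)
    trace.append(size)

# The last convolution has 64 filters, so Flatten() yields 64 * size * size values.
flattened = 64 * size * size
```

Under these assumptions the spatial size goes 100, 98, 96, 48, 46, 44, 22, so `Flatten()` produces 30976 features. The `Dense(256)` layer that follows would then hold roughly 7.9 million weights.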

Sequence classification with LSTM:

from keras.models import Sequential

from keras.layers import Dense, Dropout, Activation

from keras.layers import Embedding

from keras.layers import LSTM

model = Sequential()

model.add(Embedding(max_features, 256, input_length=maxlen))

model.add(LSTM(output_dim=128, activation='sigmoid', inner_activation='hard_sigmoid'))

model.add(Dropout(0.5))

model.add(Dense(1))

model.add(Activation('sigmoid'))

model.compile(loss='binary_crossentropy', optimizer='rmsprop')

model.fit(X_train, Y_train, batch_size=16, nb_epoch=10)

score = model.evaluate(X_test, Y_test, batch_size=16)
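The `Embedding` layer above expects every input row to be exactly `maxlen` integer word indices, each smaller than `max_features`. Keras provides `pad_sequences` in `keras.preprocessing.sequence` for this. The pure-Python sketch below only illustrates the idea, and its choices (left-padding with 0, keeping the last `maxlen` tokens) are assumptions made for the example.

```python
def pad_to_length(sequences, maxlen, pad_value=0):
    """Left-pad short sequences and keep the last `maxlen` items of long ones."""
    padded = []
    for seq in sequences:
        seq = list(seq)[-maxlen:]  # truncate from the front
        padded.append([pad_value] * (maxlen - len(seq)) + seq)
    return padded


reviews = [[4, 17, 9], [12, 5, 8, 3, 21, 7], [6]]  # hypothetical word indices
X = pad_to_length(reviews, maxlen=4)
# X == [[0, 4, 17, 9], [8, 3, 21, 7], [0, 0, 0, 6]]

# The embedding needs one row per possible index, so max_features must exceed the largest one.
max_features = max(max(row) for row in X) + 1
```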

Architecture for learning image captions with a convnet and a Gated Recurrent Unit:

(word-level embedding, caption of maximum length 16 words).

Note that getting this to work well will require using a bigger convnet, initialized with pre-trained weights.

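# imports follow the same keras.layers convention as the other examples on this page

from keras.models import Sequential

from keras.layers import Dense, Activation, Flatten, RepeatVector, Merge

from keras.layers import Convolution2D, MaxPooling2D

from keras.layers import Embedding, GRU, TimeDistributedDense

max_caption_len = 16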

vocab_size = 10000

# first, let's define an image model that

# will encode pictures into 128-dimensional vectors.

# it should be initialized with pre-trained weights.

image_model = Sequential()

image_model.add(Convolution2D(32, 3, 3, border_mode='valid', input_shape=(3, 100, 100)))

image_model.add(Activation('relu'))

image_model.add(Convolution2D(32, 3, 3))

image_model.add(Activation('relu'))

image_model.add(MaxPooling2D(pool_size=(2, 2)))

image_model.add(Convolution2D(64, 3, 3, border_mode='valid'))

image_model.add(Activation('relu'))

image_model.add(Convolution2D(64, 3, 3))

image_model.add(Activation('relu'))

image_model.add(MaxPooling2D(pool_size=(2, 2)))

image_model.add(Flatten())

image_model.add(Dense(128))

# let's load the weights from a save file.

image_model.load_weights('weight_file.h5')

# next, let's define an RNN model that encodes sequences of words

# into sequences of 128-dimensional word vectors.

language_model = Sequential()

language_model.add(Embedding(vocab_size, 256, input_length=max_caption_len))

language_model.add(GRU(output_dim=128, return_sequences=True))

language_model.add(TimeDistributedDense(128))

# let's repeat the image vector to turn it into a sequence.

image_model.add(RepeatVector(max_caption_len))

# the output of both models will be tensors of shape (samples, max_caption_len, 128).

# let's concatenate these 2 vector sequences.

model = Sequential()

model.add(Merge([image_model, language_model], mode='concat', concat_axis=-1))

# let's encode this vector sequence into a single vector

model.add(GRU(256, return_sequences=False))

# which will be used to compute a probability

# distribution over what the next word in the caption should be!

model.add(Dense(vocab_size))

model.add(Activation('softmax'))

model.compile(loss='categorical_crossentropy', optimizer='rmsprop')

# "images" is a numpy float array of shape (nb_samples, nb_channels=3, width, height).

# "partial_captions" is a numpy integer array of shape (nb_samples, max_caption_len)

# containing word index sequences representing partial captions.

# "next_words" is a numpy float array of shape (nb_samples, vocab_size)

# containing a categorical encoding (0s and 1s) of the next word in the corresponding

# partial caption.

model.fit([images, partial_captions], next_words, batch_size=16, nb_epoch=100)
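The comments above describe `partial_captions` and `next_words` but not how to build them. One plausible way, sketched here in pure Python, is to expand each caption into one training pair per word: the prefix seen so far and a one-hot vector for the word that follows. The function name, the right-padding, and the use of index 0 as padding are assumptions for illustration.

```python
def caption_to_training_pairs(caption, max_caption_len, vocab_size):
    """Expand one caption (list of word indices) into (partial caption, next word) pairs."""
    partial_captions, next_words = [], []
    for i in range(1, len(caption)):
        prefix = caption[:i][-max_caption_len:]
        # Right-pad with 0, assumed here to be a reserved padding index.
        partial_captions.append(prefix + [0] * (max_caption_len - len(prefix)))
        one_hot = [0.0] * vocab_size
        one_hot[caption[i]] = 1.0
        next_words.append(one_hot)
    return partial_captions, next_words


partials, targets = caption_to_training_pairs(
    [3, 7, 2, 5], max_caption_len=6, vocab_size=10)
```

Each caption of n words yields n - 1 pairs, so the corresponding image would need to be repeated n - 1 times in `images` to keep the three arrays aligned.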

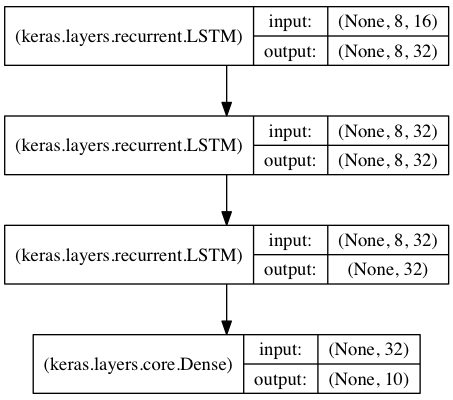

Stacked LSTM for sequence classification

In this model, we stack 3 LSTM layers on top of each other, making the model capable of learning higher-level temporal representations.

The first two LSTMs return their full output sequences, but the last one only returns the last step in its output sequence, thus dropping the temporal dimension (i.e. converting the input sequence into a single vector).

(N.B.: in Keras, "None" in an input shape indicates a variable dimension. In the graph above, the batch size is "None", meaning that any batch size is allowed for the input data).

from keras.models import Sequential

from keras.layers import LSTM, Dense

import numpy as np

data_dim = 16

timesteps = 8

nb_classes = 10

# expected input data shape: (batch_size, timesteps, data_dim)

model = Sequential()

model.add(LSTM(32, return_sequences=True, input_shape=(timesteps, data_dim))) # returns a sequence of vectors of dimension 32

model.add(LSTM(32, return_sequences=True)) # returns a sequence of vectors of dimension 32

model.add(LSTM(32)) # returns a single vector of dimension 32

model.add(Dense(10, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='rmsprop')

# generate dummy training data

x_train = np.random.random((1000, timesteps, data_dim))

y_train = np.random.random((1000, nb_classes))

# generate dummy validation data

x_val = np.random.random((100, timesteps, data_dim))

y_val = np.random.random((100, nb_classes))

model.fit(x_train, y_train, batch_size=64, nb_epoch=5, show_accuracy=True, validation_data=(x_val, y_val))
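To see what `return_sequences` changes, it can help to look at output shapes alone. The sketch below stacks three toy recurrent layers in NumPy. It deliberately uses a plain tanh RNN with random weights instead of an LSTM, so it shows only how the temporal dimension is kept or dropped, not what Keras computes. All names are hypothetical.

```python
import numpy as np


def toy_rnn(x, units, return_sequences, seed=0):
    """A plain tanh RNN (not an LSTM), used only to illustrate output shapes."""
    batch, timesteps, input_dim = x.shape
    rng = np.random.RandomState(seed)
    W = rng.normal(scale=0.1, size=(input_dim, units))  # input weights
    U = rng.normal(scale=0.1, size=(units, units))      # recurrent weights
    h = np.zeros((batch, units))
    outputs = []
    for t in range(timesteps):
        h = np.tanh(x[:, t].dot(W) + h.dot(U))
        outputs.append(h)
    # Either every step's state, or only the final one.
    return np.stack(outputs, axis=1) if return_sequences else h


x = np.random.random((4, 8, 16))                      # (batch, timesteps, data_dim)
h1 = toy_rnn(x, 32, return_sequences=True)            # (4, 8, 32)
h2 = toy_rnn(h1, 32, return_sequences=True, seed=1)   # (4, 8, 32)
h3 = toy_rnn(h2, 32, return_sequences=False, seed=2)  # (4, 32)
```

The first two layers must return full sequences because the next recurrent layer needs a 3D input. Only the last layer can collapse the time axis before the `Dense` classifier.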

Same stacked LSTM model, rendered "stateful"

A stateful recurrent model is one for which the internal states (memories) obtained after processing a batch of samples are reused as initial states for the samples of the next batch. This makes it possible to process longer sequences while keeping computational complexity manageable.

You can read more about stateful RNNs in the FAQ.

from keras.models import Sequential

from keras.layers import LSTM, Dense

import numpy as np

data_dim = 16

timesteps = 8

nb_classes = 10

batch_size = 32

# expected input batch shape: (batch_size, timesteps, data_dim)

# note that we have to provide the full batch_input_shape since the network is stateful.

# the sample of index i in batch k is the follow-up for the sample i in batch k-1.

model = Sequential()

model.add(LSTM(32, return_sequences=True, stateful=True, batch_input_shape=(batch_size, timesteps, data_dim)))

model.add(LSTM(32, return_sequences=True, stateful=True))

model.add(LSTM(32, stateful=True))

model.add(Dense(10, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='rmsprop')

# generate dummy training data

x_train = np.random.random((batch_size * 10, timesteps, data_dim))

y_train = np.random.random((batch_size * 10, nb_classes))

# generate dummy validation data

x_val = np.random.random((batch_size * 3, timesteps, data_dim))

y_val = np.random.random((batch_size * 3, nb_classes))

model.fit(x_train, y_train, batch_size=batch_size, nb_epoch=5, show_accuracy=True, validation_data=(x_val, y_val))
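The dummy data above is random, so it does not show the layout a stateful model relies on: sample i of batch k must continue sample i of batch k-1. The sketch below is one way to cut a set of long sequences into that layout with NumPy, assuming one long sequence per batch slot. The function name and the small sizes are assumptions for illustration.

```python
import numpy as np


def make_stateful_batches(sequences, timesteps):
    """Cut long sequences into chunks ordered so batch k holds chunk k of every sequence."""
    n_seq, total_steps, data_dim = sequences.shape
    n_chunks = total_steps // timesteps
    trimmed = sequences[:, :n_chunks * timesteps]  # drop any incomplete tail
    chunks = trimmed.reshape(n_seq, n_chunks, timesteps, data_dim)
    chunks = chunks.transpose(1, 0, 2, 3)          # chunk-major ordering
    return chunks.reshape(n_chunks * n_seq, timesteps, data_dim)


# 4 long sequences of 24 steps each; the batch size is then 4 (one slot per sequence).
long_sequences = np.arange(4 * 24 * 1, dtype=float).reshape(4, 24, 1)
x = make_stateful_batches(long_sequences, timesteps=8)
```

With data laid out this way, you would most likely also want to pass `shuffle=False` to `fit` so the batch order is preserved, and call `model.reset_states()` once the sequences end.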

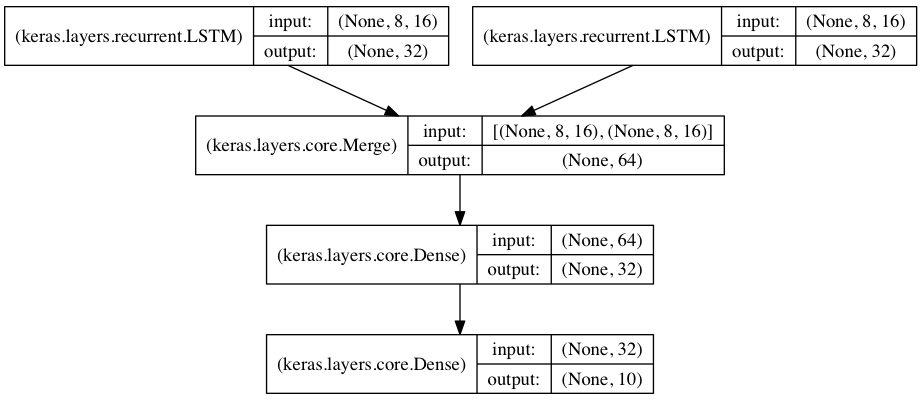

Two merged LSTM encoders for classification over two parallel sequences

In this model, two input sequences are encoded into vectors by two separate LSTM modules.

These two vectors are then concatenated, and a fully connected network is trained on top of the concatenated representations.

from keras.models import Sequential

from keras.layers import Merge, LSTM, Dense

import numpy as np

data_dim = 16

timesteps = 8

nb_classes = 10

encoder_a = Sequential()

encoder_a.add(LSTM(32, input_shape=(timesteps, data_dim)))

encoder_b = Sequential()

encoder_b.add(LSTM(32, input_shape=(timesteps, data_dim)))

decoder = Sequential()

decoder.add(Merge([encoder_a, encoder_b], mode='concat'))

decoder.add(Dense(32, activation='relu'))

decoder.add(Dense(nb_classes, activation='softmax'))

decoder.compile(loss='categorical_crossentropy', optimizer='rmsprop')

# generate dummy training data

x_train_a = np.random.random((1000, timesteps, data_dim))

x_train_b = np.random.random((1000, timesteps, data_dim))

y_train = np.random.random((1000, nb_classes))

# generate dummy validation data

x_val_a = np.random.random((100, timesteps, data_dim))

x_val_b = np.random.random((100, timesteps, data_dim))

y_val = np.random.random((100, nb_classes))

decoder.fit([x_train_a, x_train_b], y_train,

batch_size=64, nb_epoch=5, show_accuracy=True,

validation_data=([x_val_a, x_val_b], y_val))
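As a small illustration of the `concat` merge mode used above, the following NumPy sketch joins two stand-in encoder outputs along the feature axis. The arrays are placeholders and not real LSTM outputs.

```python
import numpy as np

batch = 5
encoded_a = np.ones((batch, 32))   # stand-in for encoder_a's output
encoded_b = np.zeros((batch, 32))  # stand-in for encoder_b's output

# Concatenating along the last axis keeps the batch axis and doubles the feature width.
merged = np.concatenate([encoded_a, encoded_b], axis=-1)
```

The first `Dense` layer of the decoder therefore sees 64 features per sample, 32 from each encoder.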

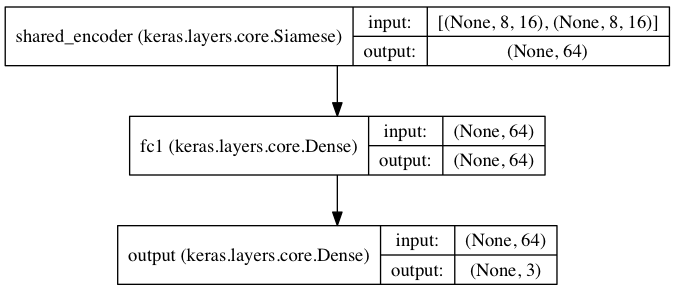

Single shared LSTM over two parallel sequences, for classification

This setup is similar to the one above, but now a single LSTM encoder is used for both input sequences. Such a setup makes sense if the two input sequences are the same type of object.

from keras.models import Sequential, Graph

from keras.layers import LSTM, Dense

import numpy as np

data_dim = 16

timesteps = 8

nb_classes = 3

encoder = Sequential()

encoder.add(LSTM(32, input_shape=(timesteps, data_dim)))

model = Graph()

model.add_input(name='input_a', input_shape=(timesteps, data_dim))

model.add_input(name='input_b', input_shape=(timesteps, data_dim))

model.add_shared_node(encoder, name='shared_encoder', inputs=['input_a', 'input_b'], merge_mode='concat')

model.add_node(Dense(64, activation='relu'), name='fc1', input='shared_encoder')

model.add_node(Dense(3, activation='softmax'), name='output', input='fc1', create_output=True)

model.compile(optimizer='adam', loss={'output': 'categorical_crossentropy'})

# generate dummy training data

x_train_a = np.random.random((1000, timesteps, data_dim))

x_train_b = np.random.random((1000, timesteps, data_dim))

y_train = np.random.random((1000, 3))

# generate dummy validation data

x_val_a = np.random.random((100, timesteps, data_dim))

x_val_b = np.random.random((100, timesteps, data_dim))

y_val = np.random.random((100, 3))

model.fit({'input_a': x_train_a, 'input_b': x_train_b, 'output': y_train},

batch_size=64, nb_epoch=5,

validation_data={'input_a': x_val_a, 'input_b': x_val_b, 'output': y_val})
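One practical effect of sharing the encoder is a smaller parameter count. The sketch below estimates it, assuming the standard LSTM parameterization: four gates, each with input weights, recurrent weights, and a bias, and no peephole connections. The helper name is hypothetical.

```python
def lstm_param_count(input_dim, units):
    """Weights in a standard LSTM: 4 gates, each with input, recurrent, and bias terms."""
    return 4 * (input_dim * units + units * units + units)


per_encoder = lstm_param_count(input_dim=16, units=32)
two_separate = 2 * per_encoder  # two independent encoders, as in the merged model
shared = per_encoder            # one encoder reused for both inputs
```

Under that assumption each encoder holds 6272 weights, so sharing halves the encoder parameters and forces both inputs through the same learned representation.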